I clicked "allow all" on three things this week. A note-taker that wanted my calendar. A coding agent that wanted my GitHub. A research tool that wanted my Drive. Each one threw up the same Google consent screen, the same ten checkboxes, the same little blue button. I read maybe one of them carefully. I am being generous calling it "maybe."

Then on Sunday, Vercel told the world that someone at Vercel did the same thing in February.

The breach started with Context AI, an "AI Office Suite" tool that automates workflows across your apps. A Vercel employee installed it, granted it broad Google Workspace scopes through OAuth, and went back to shipping. Two months ago, malware on a Context AI staffer's laptop, the Lumma infostealer, scraped session tokens off the machine. One of those tokens belonged to that Vercel employee's account. The attacker walked into Vercel's Google Workspace, pivoted into internal systems, lifted customer credentials and source code, and put the package up for sale on a cybercrime forum for $2 million under the ShinyHunters name. By the time the Vercel bulletin landed on Sunday, the trade was already done.

Yeah. Ouch.

The "allow all" button is the new attack surface.

Here's the thing about OAuth scopes. They were designed in an era where the client requesting access was a server you ran, with engineers maintaining it, and a security team auditing it. Slack adding Google Calendar. Asana reading your GitHub. The integrations were big, slow-moving, and the trust calculus was a weekend of legal review.

That model broke this year. Quietly. I have probably granted twenty AI tools access to my work accounts in the last six months. My organization is doing the same dance, multiplied by every employee. Each integration looks tiny on its own. Stacked together they form a permission graph that nobody has actually audited, because the audit was never the point. The point was velocity. The point was "try the new agent and see if it can ship your standup for you."

Frankly, I do not know who maintains Context AI. I do not know which open-source dependencies it pulls. I do not know what their employee laptop hygiene looks like. Neither did Vercel. Neither do you.

That is the new attack surface. Not the model. Not the prompt. The permission grant.

If you came up through engineering, you have a model for this already. It is dependency hell, but the dependency is a token instead of a package, and the blast radius is whatever scopes you ticked the day you signed up. Which, if you have ever clicked "allow all," is the entire account.

Non-human identity is a venture category now, and it just got urgent.

If you have not paid attention to the non-human identity space, this is the right week to start. The term sounds like enterprise jargon because it is. NHI covers the part of the access graph that is not a human logging in. Service accounts. API keys. Workload identities. And, increasingly, the OAuth tokens that AI agents and SaaS integrations hold on behalf of humans.

Astrix has been at this since 2021. Oasis Security and Entro showed up later with similar pitches. Clutch raised on it last year. GitGuardian, the secrets-scanning company, closed a $50M Series C in February explicitly to fund their AI-agent-security expansion. The Cloud Security Alliance survey found 24% of orgs planning NHI investment in the next six months and 36% in the next twelve. That distribution moves materially after a Vercel-shaped news cycle, and it moves toward more capital, faster, in a tighter window.

The diligence question I am bringing to security pitches now is more specific than it was a quarter ago. It is no longer "do you cover NHI." It is three questions. Do you cover the OAuth tokens that agents and AI office suites hold on behalf of humans? Do you alert when an integration's scope creep crosses a threshold? Do you have a runbook for revoking trust at the OAuth-app layer when a third party gets compromised? That last one is operationally hard. The product surface for it barely exists.
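
To make the scope-creep question concrete, here is a minimal sketch of what that alert could look like. It is my illustration, not any vendor's product: the `BROAD_SCOPES` set, the `scope_creep` function, and the threshold are all assumptions I made up for the example. The scope strings themselves are ordinary Google ones.

```python
# Hypothetical sketch: flag an integration whose granted scopes have grown
# since it was first approved. Names and threshold are illustrative only.

# Scopes treated as "broad" for this example (an assumption, not a standard list).
BROAD_SCOPES = {
    "https://www.googleapis.com/auth/drive",
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/calendar",
}


def scope_creep(baseline, current, max_new_broad=0):
    """Compare the scopes approved at install time with the scopes held today."""
    added = set(current) - set(baseline)
    new_broad = added & BROAD_SCOPES
    return {
        "added": sorted(added),
        "new_broad": sorted(new_broad),
        # Alert once the count of newly acquired broad scopes passes the threshold.
        "alert": len(new_broad) > max_new_broad,
    }


baseline = ["https://www.googleapis.com/auth/calendar.readonly"]
current = [
    "https://www.googleapis.com/auth/calendar.readonly",
    "https://www.googleapis.com/auth/drive",
]
report = scope_creep(baseline, current)
print(report["alert"], report["new_broad"])
```

The hard part is not the diff. It is getting a trustworthy baseline and a current snapshot for every integration in the org, which is exactly what I would ask a vendor to show me.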

If you came up through engineering and you are pitching investors in this space, here is the small but real edge you have. You can read an OAuth manifest. You can tell the difference between a scope that needs to be wide and a scope that is wide because the engineer was lazy. Most investors cannot do that yet. Most founders, on the call, cannot either. The split between "the founder opens the manifest and walks me through it" and "the founder has to text the engineer who shipped that flow eighteen months ago" is becoming a real signal for me. Not the only signal. But the kind that did not exist on a diligence rubric a year ago.
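
Reading a manifest mostly means spotting scopes that have a narrower sibling. Here is a rough sketch of that check, assuming a hand-written mapping. The `NARROWER` table and `review_scopes` function are hypothetical names of mine, the table is nowhere near complete, and whether the narrower scope is actually enough depends on what the tool does.

```python
# Hypothetical sketch: read a list of requested Google scopes and point out
# the ones that have a narrower sibling. The mapping is illustrative, not complete.

NARROWER = {
    # Full Drive access vs. only files the app itself created or was handed.
    "https://www.googleapis.com/auth/drive":
        "https://www.googleapis.com/auth/drive.file",
    # Full calendar read/write vs. read-only access to events.
    "https://www.googleapis.com/auth/calendar":
        "https://www.googleapis.com/auth/calendar.events.readonly",
    # Full mailbox access vs. read-only mail.
    "https://mail.google.com/":
        "https://www.googleapis.com/auth/gmail.readonly",
}


def review_scopes(requested):
    """Return (scope, suggestion) pairs for scopes that look wider than needed."""
    findings = []
    for scope in requested:
        if scope in NARROWER:
            findings.append((scope, NARROWER[scope]))
    return findings


requested = [
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar.events.readonly",
]
findings = review_scopes(requested)
for scope, suggestion in findings:
    print(f"wide: {scope} -> consider: {suggestion}")
```

A founder who can explain why a flagged scope really does need to be wide is giving me a better answer than one whose manifest comes back clean by accident.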

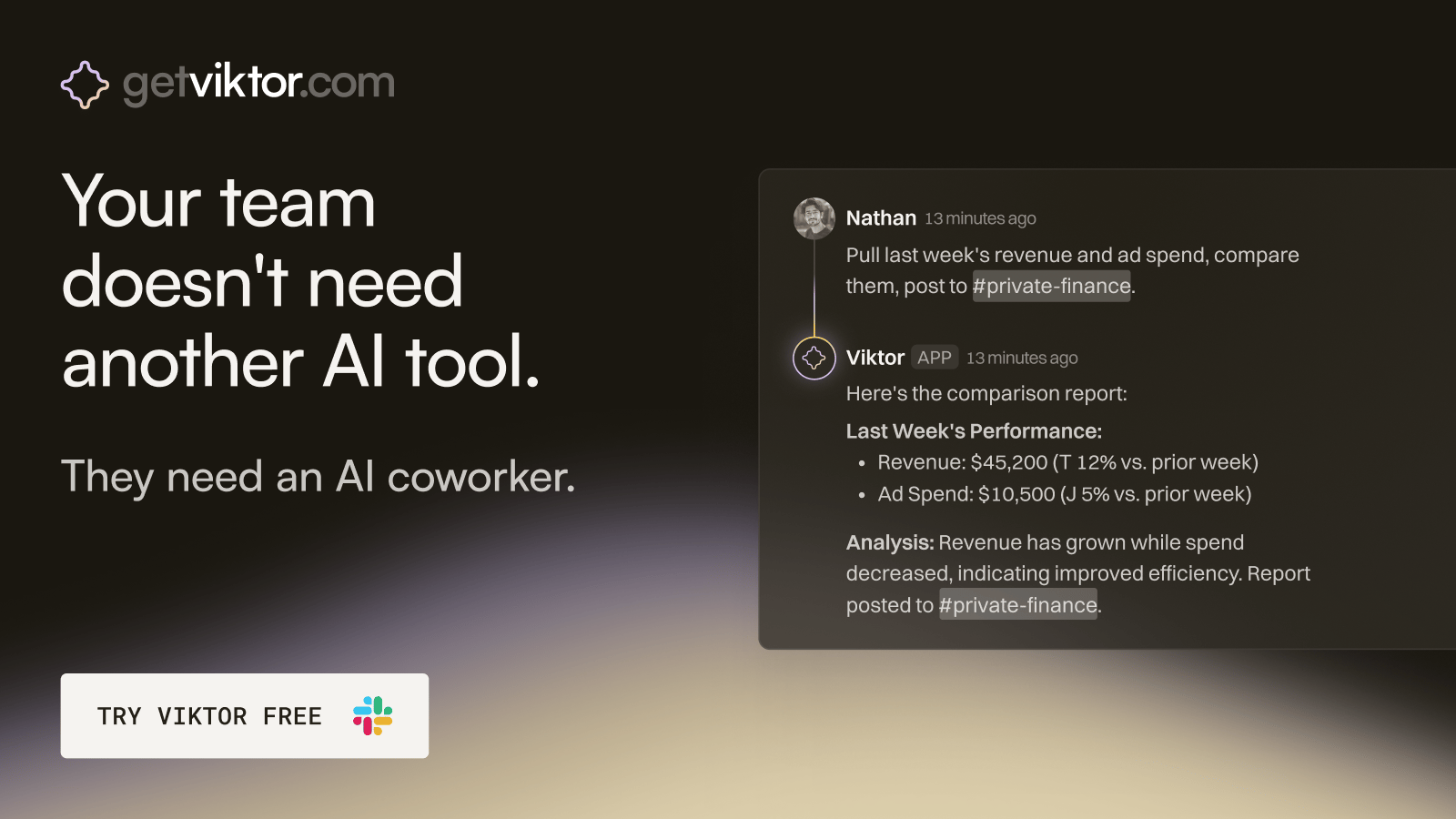

The standards are catching up. The deployment is not.

The Model Context Protocol shipped a new authorization spec this April that requires OAuth 2.1 with resource indicators. Per-tool scopes. Short-lived access tokens. Refresh rotation. Credential isolation between dev, staging, and prod. If you read that and felt a vague comfort, do not. The spec is what good behavior looks like in 2027. What we have shipped today is closer to: I gave my note-taker access to everything, and so did you, and so did the person at Vercel.
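
For a sense of what "resource indicators" changes on the wire, here is a sketch of an authorization request that names the one resource the token is for (RFC 8707) and uses PKCE (RFC 7636). Everything specific in it is an assumption for illustration: the endpoint, client ID, redirect URI, scope name, and resource URL are all made up, and a real client would take them from the server's metadata.

```python
# Hypothetical sketch: build an OAuth authorization URL with PKCE and a
# resource indicator. All endpoint and client values are placeholders.
import base64
import hashlib
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def pkce_pair():
    """Return (code_verifier, code_challenge) using the S256 method."""
    verifier = secrets.token_urlsafe(32)  # 43 URL-safe characters
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    # Base64url-encode the hash and drop the padding.
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorization_url(auth_endpoint, client_id, redirect_uri,
                            scope, resource, challenge, state):
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,        # ask for one narrow scope, not everything
        "resource": resource,  # the single server this token should be valid for
        "code_challenge": challenge,
        "code_challenge_method": "S256",
        "state": state,
    }
    return f"{auth_endpoint}?{urlencode(params)}"


verifier, challenge = pkce_pair()
url = build_authorization_url(
    auth_endpoint="https://auth.example.com/authorize",   # placeholder
    client_id="example-client",                           # placeholder
    redirect_uri="http://localhost:8765/callback",        # placeholder
    scope="notes:read",                                   # made-up scope name
    resource="https://mcp.example.com/",                  # placeholder
    challenge=challenge,
    state=secrets.token_urlsafe(16),
)
query = parse_qs(urlparse(url).query)
print(query["resource"], query["scope"])
```

The point of the `resource` parameter is that a token minted for one server is not supposed to be useful anywhere else. Compare that with a single Workspace grant that opens the whole account.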

The standards are real. The deployment is not. There is a window, possibly twelve to eighteen months, where this gap is the actual moat for the security companies in this space, and the actual exposure for everyone else.

As for me?

I went back through my Google account on Monday and revoked sixteen OAuth grants. About half were to tools I had used once and forgotten. Three were to companies that, on a quick search, I could not confirm still exist as going concerns. One was to a startup that pivoted from being a meeting bot into something I cannot find a current website for. I do not know what has happened to the scopes I granted it, or who holds them today.

The honest read is that I would not have caught the Vercel pattern either. I am not more careful than the average engineer. I am the average engineer. I click the button because reading the modal slows me down, and the agent is supposed to be the thing that makes me faster.

That is the part I do not know how to fix yet. The economics of agentic productivity ask me to grant scopes faster. The economics of supply chain security ask me to grant them slower. Right now those two pressures point in opposite directions, and the second one only tightens after a breach.

I rotated my keys this morning. I am still going to click the button.

— SWEdonym