Today was one of those days where you have to stop and mark the calendar.

Two announcements dropped within hours of each other, and the combination is, I think, one of the more clarifying moments I've seen at the intersection of AI capability and security since I've been doing this work. First: Anthropic published the details of Claude Mythos Preview and Project Glasswing, a frontier model that can autonomously find and exploit zero-day vulnerabilities at a scale no human or automated tool has matched before. Second, and with much less fanfare: Zhipu AI released the public weights for GLM-5.1, an open-source model that reportedly scores 94.6% of Claude Opus 4.6's coding benchmark performance, costs $3 a month on the API, was trained on Huawei Ascend chips, and is available to anyone with a hard drive and an internet connection.

These two things happening on the same day is not a coincidence you can ignore. I'll explain why. But first, a confession.

There's a function I wrote in 2019 that I'm suddenly thinking about again.

I won't tell you which codebase. I will tell you it involved pointer arithmetic, a buffer I assumed was always bounded, and the kind of confidence you have at 26 when you've shipped a few things without the world ending. It passed code review. It passed fuzzing. It stayed in production for two years before a very patient colleague caught it during a refactor.

I thought about that function on Thursday when I read Anthropic's announcement for Claude Mythos Preview and Project Glasswing. Not because my old bug is in scope for them. Because the researchers described, in technical detail, the kinds of bugs Mythos finds: subtle memory safety issues, assumptions about buffer bounds that hold 99.9% of the time and fail the other 0.1%, chained vulnerabilities where no single flaw looks fatal but three together open root access. Things that survive years. Things that survive automated testing. Things that survive code review.

Things like the function I wrote in 2019.
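
For anyone who has not met this class of bug up close, here is a minimal sketch in C. To be clear about the assumptions: this is not the 2019 function and it is not taken from Anthropic's write-up. The record layout (one length byte followed by that many name bytes), the `NAME_CAP` constant, and both function names are hypothetical, invented only to illustrate the pattern of "a bound I assumed always held."

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical destination capacity, chosen for illustration only. */
enum { NAME_CAP = 64 };

/*
 * Unchecked version: trusts the length byte at the front of the record.
 * Every well-formed record has a short name, so this works in normal use.
 * A record with len >= NAME_CAP writes past the end of `out`, and a
 * truncated record makes memcpy read past the end of `rec`.
 */
void copy_name_unchecked(char out[NAME_CAP], const uint8_t *rec) {
    uint8_t len = rec[0];
    memcpy(out, rec + 1, len);
    out[len] = '\0';
}

/*
 * Checked version: validates the length byte against both the bytes
 * actually present in the record and the destination capacity.
 * Returns 0 on success, -1 if the record is malformed.
 */
int copy_name_checked(char out[NAME_CAP], const uint8_t *rec, size_t rec_len) {
    if (rec_len < 1) {
        return -1;                 /* no length byte at all */
    }
    size_t len = rec[0];
    if (len > rec_len - 1) {
        return -1;                 /* record shorter than it claims */
    }
    if (len >= NAME_CAP) {
        return -1;                 /* no room for the terminator */
    }
    memcpy(out, rec + 1, len);
    out[len] = '\0';
    return 0;
}
```

The two functions behave identically on every input a reviewer or a typical test suite is likely to try. They only diverge on the malformed records, which is why the unchecked one can sit in production for years.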

Mythos Preview found a 27-year-old bug in OpenBSD and a 16-year-old flaw in FFmpeg that survived 5 million automated test runs.

I want to pause on that for a second. Not five runs. Five million. That is not a bug that scanners miss because they don't have enough coverage. That is a bug that humans and every tool we've built to replace humans looked at, shrugged, and passed. Mythos found it anyway. And it did not stop at proofs of concept. It developed working exploits: JIT heap sprays, multi-bug chains requiring three or four separate vulnerabilities in sequence, kernel privilege escalation via ROP gadgets. Across ~7,000 code entry points. Without human direction once the task was set.

The scale comparison that hit hardest: Mythos built working JavaScript shell exploits 181 times in testing. The previous generation of models? Twice.

That is not an incremental improvement. That is a phase change.

Anthropic's response is Project Glasswing, a defensive initiative with $100M in usage credits, $4M in direct donations to open-source security organizations, and founding partners that read like a tech industry roll call: AWS, Apple, Google, Microsoft, NVIDIA, and 40+ organizations focused on critical infrastructure. The model is available through the Claude API, Bedrock, Vertex, and Azure Foundry at $25/$125 per million tokens. No general public access planned.

The structure of Glasswing is interesting to me as a VC, separate from the security story entirely. Anthropic is building an institutional access tier, gated, credentialed, partnered with the exact companies that run the infrastructure the rest of the internet sits on. That is a different commercial motion than selling API tokens to developers. It is enterprise infrastructure, with the moat being trust and access control rather than raw capability. Worth watching as a business pattern.

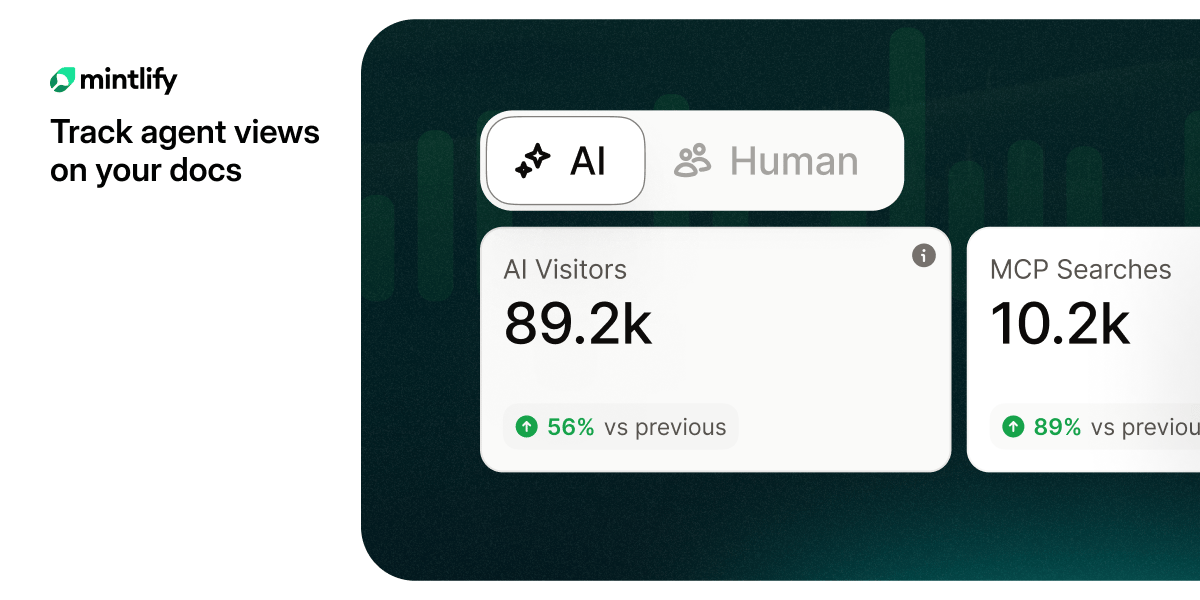

Are you tracking agent views on your docs?

AI agents already outnumber human visitors to your docs — now you can track them.

But here is where the story gets complicated, and where I want you to pay close attention.

The same day Anthropic announced a model that can find and exploit zero-days at superhuman scale, Zhipu AI released the weights for GLM-5.1.

GLM-5.1 is an open-source model from z.ai. It scores 94.6% of Claude Opus 4.6's coding benchmark performance. It costs $3 a month on the API. The weights are public, meaning you can run it yourself, on your own hardware, with no usage logging, no rate limits, no oversight. It was trained entirely on Huawei Ascend chips, no NVIDIA GPUs required, which matters for anyone subject to US export controls or building in regions where the GPU supply chain is constrained.

The self-reported benchmark caveat is real: as of this writing, no independent lab has corroborated GLM-5.1's numbers. Take the 94.6% figure with appropriate skepticism. But even at 80% of frontier performance, and even accounting for the fact that "coding benchmark performance" and "autonomous vulnerability exploitation" are related but distinct tasks, the direction of travel is clear. Open-source models are climbing toward frontier capability at a pace that was not obvious two years ago.

The asymmetry this creates is the thing nobody wants to say plainly.

The defensive tools are gated. Glasswing requires institutional access, credentialing, partnership agreements. The offensive capabilities are democratizing. GLM-5.1's weights are public today. The next version will be more capable. The version after that more capable still. If you believe, as the Mythos research suggests, that model capability and vulnerability exploitation are tightly correlated, then the gap between "what the defenders can access" and "what everyone else can access" is narrowing, not widening.

This is not a new pattern in security. It rhymes with the history of exploit toolkits, with Metasploit becoming publicly available, with the professionalization of ransomware-as-a-service. The tools that sophisticated attackers use become commodity tools after a lag of 18 months to 3 years. The difference now is that the capability jump is larger and the lag may be shorter.

For founders building in the security space: the threat model has changed for every company that runs software. Not in a theoretical sense. In an operationally specific sense. The assumptions your customers made when they last reviewed their attack surface need to be reviewed again. The companies building AI-powered defensive tooling (continuous scanning, automated patch validation, AI-native incident response) are now selling into a customer urgency that did not exist six months ago. That is a genuine tailwind, and it compounds.

For VCs evaluating security companies: the bar for what counts as a "technical moat" in this space just shifted. A product that was differentiated because it used ML for anomaly detection is no longer differentiated if every attacker has access to a frontier-class model for finding the anomalies themselves. The question I'm asking in diligence now is not "does this company use AI?" It is "does this company's threat model account for an adversary who also has AI, specifically an adversary who has GLM-5.1 or its successor running locally on cheap hardware?"

As for me? I am sitting with something that is hard to articulate cleanly.

I spent years writing code, reviewing code, shipping systems I believed were reasonably secure. I know the culture of it, the combination of genuine effort and optimistic assumption that characterizes most production software. I know the function I wrote in 2019 is not an outlier. There are millions of functions like it, in systems we rely on, written by people who were trying their best.

Mythos found things that survived 27 years. It found things that survived five million test runs. And the capability that let it do that is, right now, being trained into models that will be open-source within a year or two.

I do not have a clean answer for what that means. I have watched the security industry deal with each previous wave of tooling democratization, and we are generally, unevenly, imperfectly still here. But I want to be honest that this one feels different in magnitude, even if not different in kind. The phase change is real. The number 181 is sitting in the back of my head and I'm not sure what to do with it yet.

Maybe that is enough for now. To name it clearly, look at it directly, and keep building anyway.

— SWEdonym