Thursday morning in Oakland, Elon Musk took the stand for the third day. OpenAI's lawyer asked, plainly, whether xAI had used distillation on OpenAI's models to train Grok. Musk said it was "partly" true. He then said it was standard practice across the field. There were audible gasps in the courtroom, according to the reporters in the gallery, which is funny because most of the people in the AI training pipeline have known this for two years.

What changed Thursday is that the sentence is now a court transcript.

I have been thinking about that transcript all weekend. Not because the admission is shocking on the technical merits (it is not), but because the legal merits of the case it sits inside are unusually load-bearing for the rest of the industry. The trial is not really about Elon. It is about whether charitable-trust law applies to a $1T-aspiring AI lab.

The quiet part is now in the record.

If you have ever trained a model, you know the shape of distillation. You sample the outputs of a stronger teacher, you fit a smaller student to those outputs, and the student converges to something that approximates the teacher at a fraction of the compute. It works. It has worked since the original Hinton paper. Every serious lab does some version of it, on some part of the stack, for some subset of capabilities. The question has never been whether labs distill. The question is which model's outputs are upstream of which model's training set, and nobody answers that question on the record.
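
For readers who have not trained a model, here is a minimal sketch of that loop in plain Python. Everything in it is a toy assumption of mine: `teacher` is a fixed function standing in for the stronger model, the student is a two-parameter logistic model, and `distill` is a hypothetical helper name. Teacher and student are the same tiny size here, so the sketch shows only the "fit the student to the teacher's outputs" step, not the compute savings, and it says nothing about how any lab actually does this.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x):
    # Stand-in for the stronger model: a fixed function we can only query.
    # The coefficients 3.0 and -1.0 are arbitrary toy values.
    return sigmoid(3.0 * x - 1.0)


def distill(num_samples=200, epochs=2000, lr=1.0, seed=0):
    """Fit a student sigmoid(w * x + b) to the teacher's soft outputs."""
    rng = random.Random(seed)

    # Step 1: sample inputs and record what the teacher says about each one.
    xs = [rng.uniform(-2.0, 2.0) for _ in range(num_samples)]
    soft_labels = [teacher(x) for x in xs]

    # Step 2: train the student on those outputs with full-batch gradient
    # descent on the cross-entropy between teacher and student probabilities.
    w, b = 0.0, 0.0
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, p in zip(xs, soft_labels):
            q = sigmoid(w * x + b)
            grad_w += (q - p) * x  # d(cross-entropy)/dw for one sample
            grad_b += (q - p)      # d(cross-entropy)/db for one sample
        w -= lr * grad_w / num_samples
        b -= lr * grad_b / num_samples
    return w, b


if __name__ == "__main__":
    w, b = distill()
    grid = [i / 10.0 for i in range(-20, 21)]
    gap = max(abs(teacher(x) - sigmoid(w * x + b)) for x in grid)
    print(f"student w={w:.3f}, b={b:.3f}, max gap vs teacher={gap:.4f}")
```

Note that the student never sees the teacher's parameters or its training data. It sees only sampled outputs, which is why access to those outputs is the thing that gets gated.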

Until Thursday. The CEO of an $80B-ish AI lab said, under oath, that his company uses a competitor's models to train its own, and that this is normal. Frankly, it is normal. The new fact is the venue. A terms-of-service complaint, an arbitration filing, or a quiet cease-and-desist now has a sworn admission to point to. If you are the legal team at OpenAI or Anthropic, you are not gasping. You are screenshotting the transcript.

Here's the thing about moats. Seed-round AI valuations have been pricing in the assumption that frontier capability is hard to replicate. The agentic-leverage decks I have looked at this quarter all assume the model the founder is building on is durable for at least a couple of years. If the cost to clone a model's behavior is "rent a teacher signal and some H100s," that assumption is weaker than the price tag suggests. It does not fall to zero. The teacher signal is gated by API access, by usage limits, and by terms-of-service teeth that just got sharper. But it does fall.

I underwrite this differently than I did six months ago. The diligence question used to be: how good is the model. The new question is: which upstream model is your training pipeline implicitly riding on, and what happens to your unit economics if that upstream provider terminates your account on Tuesday.

Charitable-trust law just walked into AI policy.

The legal substance of Musk v. Altman, after twenty-four of the original twenty-six claims fell away, is two things: breach of charitable trust, and unjust enrichment. The remedy Musk is asking for is the unwinding of OpenAI's October 2025 conversion to a public benefit corporation, the removal of Sam Altman and Greg Brockman from the board, and roughly $130B in damages routed back to the nonprofit foundation. He also keeps repeating, in court, the line "you can't just steal a charity," which is a serviceable rallying cry and also a rough summary of the legal theory.

Judge Gonzalez Rogers is widely expected to deliver a partial finding: that OpenAI breached fiduciary duties to its original donor base on at least one count, while declining to order the full structural unwind. The expected timing is mid-May. That would be a careful ruling. It would also create a precedent that gets cited for the next decade.

The reason this matters beyond OpenAI is that the public benefit corporation form is the default structural template for this generation of AI labs. Anthropic is a Delaware PBC. Many of the alumni-led mega-seed labs that closed funding in the last two months are PBCs. The mission language varies, but the fiduciary scaffolding is similar enough that a ruling that establishes how charitable-trust duties survive a nonprofit-to-PBC conversion is a ruling that lands on more than one cap table. If you are a GC at any of those labs, you have already filed a memo this weekend. If you are a partner at a fund underwriting a PBC-shaped lab, you should have read it.

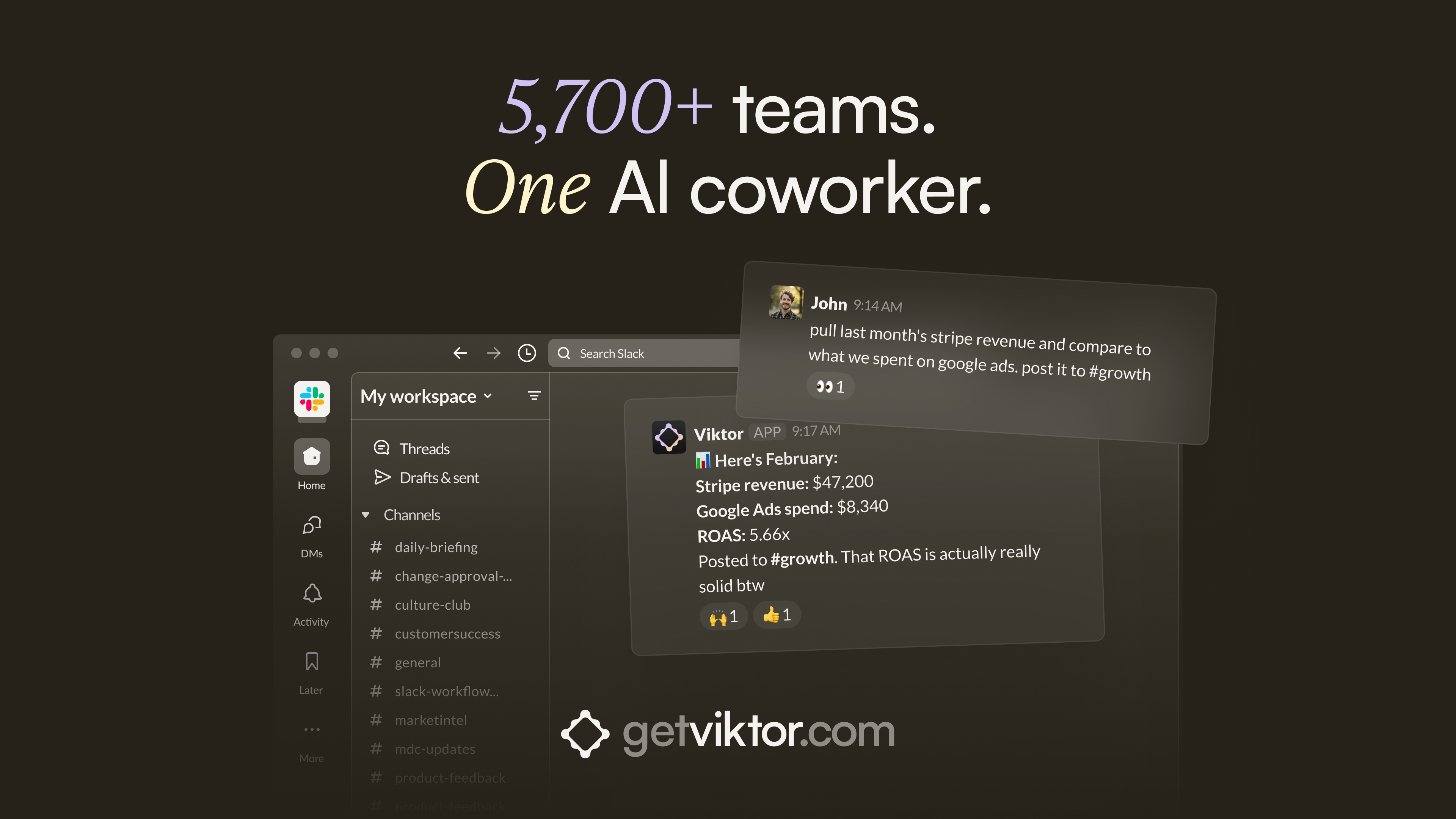

Musk is the loudest signal in the room and also the noisiest.

I want to be honest about how I read the optics. Musk is suing a company he co-founded, while simultaneously running a competing lab that just admitted in court to training on the defendant's models. The Washington Post piece from Saturday said the trial "is all about him," and the framing is fair. OpenAI's lawyer William Savitt has been arguing all week that Musk was never actually committed to a nonprofit structure.

Both things can be true. The optics of the plaintiff are bad. The legal question is real. Charitable-trust doctrine does not care that the person waving it is also a competitor with a chip on his shoulder. It cares whether assets donated for one purpose got redirected to another, and whether the people in charge owed a duty to the donors. That is a question with a clean answer, and the answer the court arrives at applies to every AI lab that took early dollars under a mission charter.

What I am watching this week.

The remedies phase will tell us more than the liability phase. A cosmetic remedy looks like extra reporting, an amended charter, maybe a board-level ethics committee. A structural remedy looks like a forced separation between the for-profit subsidiary and the nonprofit parent or, in the unlikely case, a partial unwind of the PBC conversion. Each is a different signal for AI lab cap tables, for the IPO calendar, and for how seed-stage diligence on PBC-form labs gets done in the back half of 2026.

The other thing I am watching is what every AI lab GC quietly tells their CEO this week. The distillation transcript is not a smoking gun against any specific company. It is closer to background radiation. But it changes the cost-benefit on the next training-data complaint, and that batch of complaints will tell us whether the moat is mostly compute access, mostly TOS enforcement, or mostly nothing.

I do not know how the case lands. I do not have a clean read on whether the structural remedy comes through. I do know that a transcript exists, in federal court, where the CEO of a frontier lab said the quiet part out loud, and that on the same record, charitable-trust law showed up uninvited to the AI policy conversation.

I would feel better about my underwriting if either of those things had happened in isolation. They happened in the same week.

— SWEdonym